Altman isn't afraid of an attack on his mansion. He has a bunker.

Bet in public that AI will succeed; prepare in private for AI to slip out of control.

In 2016, Sam Altman built an underground bunker in Wyoming: 1,200 m², three stories, 500 kg of gold, 5,000 potassium iodide tablets, 5 tons of freeze-dried food, and 100,000 rounds of ammunition. That year, OpenAI had just marked its first anniversary.

Ten years later, the head of the world's most powerful AI company was attacked twice in one weekend, first with an incendiary bottle and then with gunfire. On his blog, he wrote that he had seriously underestimated the power of narrative. Was he talking about someone else's narrative, or his own?

48 hours, two attacks

April 10, 3:40 a.m., Chestnut Street, San Francisco. A 20-year-old man, Daniel Moreno-Gama, threw a burning bottle at the metal gate of Sam Altman's residence. The outer gate caught fire and he fled. About an hour later, the same man appeared near OpenAI's San Francisco office, where he again threatened arson and was arrested. The charges included attempted murder and arson.

Sam Altman's San Francisco residence and surveillance footage of the arson suspect

Two days later, on April 12 at 1:40 a.m., a Honda pulled up next to another Altman residence, on Russian Hill. A passenger reached out of the window and fired a shot at the house. Surveillance video captured the license plate, and police subsequently arrested two people: Amanda Tom (25) and Muhamad Tarik Hussein (23). A search of their home turned up three guns, and both were charged with negligent discharge of a firearm.

One weekend, two attacks.

The suspect in the first case, Daniel Moreno-Gama, is an AI pessimist. On social media he invoked Dune's theme of humanity's struggle against machines, wrote about the existential risk posed by AI misalignment, and criticized technology leaders for "gambling with the fate of humanity" in pursuit of "transhumanism".

Where does his argument come from?

Over the past five years, one of OpenAI's standard moves in building the AI narrative has been to stress, again and again, how real the AGI threat is: to get governments to take regulation seriously, to get investors to understand how big the bet is, and to make the whole industry feel the competition. The narrative serves a purpose. It lets OpenAI stand on three claims: the frontier is the most dangerous place, we are the most responsible party, so the money should go to us.

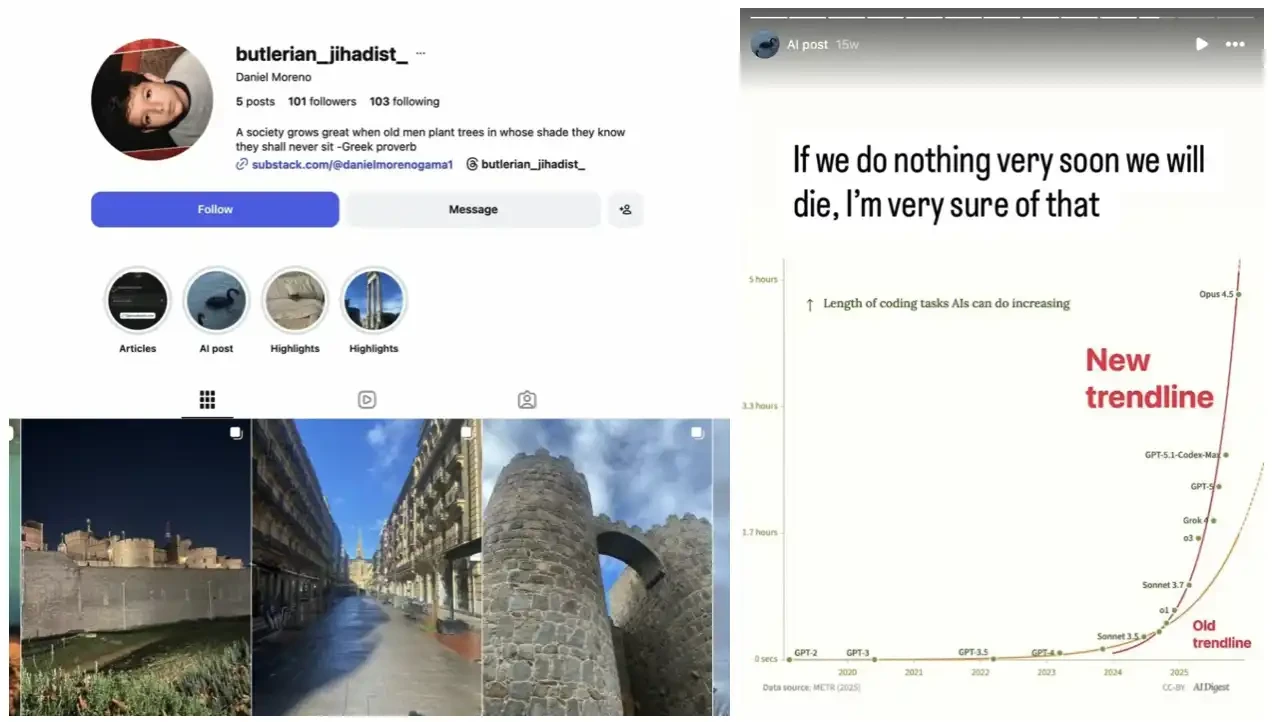

But the phrase "this is the most dangerous technology in human history" does not stay inside technology and investor circles. It travels downward, and some people take it literally as operating instructions. Moreno-Gama wrote in an Instagram post that "exponential progress plus alignment failure equals existential risk". The original source of this line of argument is the mainstream literature of AI safety research, much of which is funded or endorsed by OpenAI.

Daniel Moreno-Gama's social media account

After the first attack, Altman wrote on his blog. He posted a photograph of his child, which he hoped would stop the next person from throwing a burning bottle at his house. He acknowledged the "legitimate moral position" of his opponents and called for public discussion to be "a little less explosive", both literally and metaphorically.

He was also responding to an in-depth New Yorker report. The article, published a few days before the attack, publicly questioned his credibility as the leading authority on AI. He wrote, "I have greatly underestimated the power of public opinion and speech."

Two days later, his home was attacked again, this time with gunfire.

The security budget is a statement. The bunker is another

This trajectory began a year earlier than most people realize.

New York, December 4, 2024. UnitedHealthcare CEO Brian Thompson was shot dead outside the Hilton hotel. The suspect, Luigi Mangione, an Ivy League graduate, left a handwritten statement criticizing the health insurance industry. The case triggered an unusual wave of reactions on social media: large numbers of ordinary users publicly expressed sympathy for the killer and even turned him into a kind of protest symbol.

At that moment, a door was pushed open.

After Thompson, executive security went from a perk to a survival need. According to Fortune magazine, physical attacks on executives of large companies have increased 225 percent since 2023. Among S&P 500 companies, 33.8 percent reported executive security spending in their 2025 financial filings, up from 23.3 percent in 2020. The median cost for companies providing security was $130,000, up 20 percent year over year and double the figure of five years earlier.

The AI industry is the newest and most visible bearer of this trend. The ten largest technology companies spent more than $45 million on CEO security in 2024. Zuckerberg alone accounted for over $27 million, more than the combined total for four other CEOs, including those of Apple and Google. Nvidia's Jensen Huang was at $3.5 million in 2025, up 59 percent year over year. Google's Sundar Pichai was at $8.27 million, up 22 percent.

AI has something other industries do not: even the people building it believe the technology could destroy civilization. The Pew Research Center surveyed 28,333 respondents worldwide in 2025; only 16% were excited about AI development, while 34% were concerned. The counterintuitive finding is that the higher the level of education and income, the stronger the fear of AI getting out of control. The people who know it best fear it most.

Shortly before that, gunmen fired 13 shots at the home of Ron Gibson, a member of the Indianapolis city council, in the middle of the night, waking his 8-year-old son. A handwritten note was left at the door: "No data centres allowed." The FBI has joined the investigation. Jordyn Abrams, a researcher at George Washington University's Program on Extremism, noted that data centres are becoming targets for anti-technology, anti-government extremists.

Scene of the shooting at Ron Gibson's home

This fear is no secret within the industry. It is simply not said out loud.

Altman built that bunker in Wyoming in 2016. That year, OpenAI had just been founded and was showing the world how AI would benefit humanity. The two things happened side by side: on stage he said AI was humanity's greatest opportunity, while in private he stockpiled enough ammunition to supply an armed militia.

It is a rational double bet: bet in public that AI will succeed, prepare in private for AI to slip out of control.

Altman's boomerang

On February 27 this year, OpenAI signed a contract with the United States Department of Defense allowing the Pentagon to deploy ChatGPT on classified defence networks, covering "any legitimate use". On the same day, Altman publicly agreed with Anthropic's position on restricting military applications of AI. ChatGPT's single-day uninstalls then surged 295 percent, and one-star reviews rose 775 percent within 24 hours. The QuitGPT boycott campaign reportedly drew more than 1.5 million people.

On March 21, around 200 protesters marched in San Francisco past the offices of Anthropic, OpenAI and xAI, demanding that the three CEOs commit to pausing the development of frontier AI. In the same period, London saw its largest anti-AI march to date.

Altman's Wyoming bunker and the security he employs are aimed at two different risks: one from outside, the other from what he is building. He took both risks seriously in private and admitted only one in public.

The same week as the first attack, the New Yorker published an in-depth report on Altman. Journalists Ronan Farrow and Andrew Marantz interviewed more than 100 sources, and the core argument came down to one word: untrustworthy. The report quotes a former member of the OpenAI board who calls Altman an "antisocial personality" and "unbound by the truth". Several former colleagues described how he repeatedly reworded his position on AI safety and redefined power structures when it suited him.

In response, Altman acknowledged his tendency to "avoid conflict". He had built a public narrative of "AI as an extinction-level threat" as a tool for fundraising and regulatory maneuvering. In the end, the tool flew out of his hand, circled around and smashed back into his own door.